Sources#

- Anthropic's Boris Cherny: Why Coding Is Solved, and What Comes Next

- Full Walkthrough: Workflow for AI Coding — Matt Pocock

- How Anthropic's product team moves faster than anyone else | Cat Wu (Head of Product, Claude Code)

Summary#

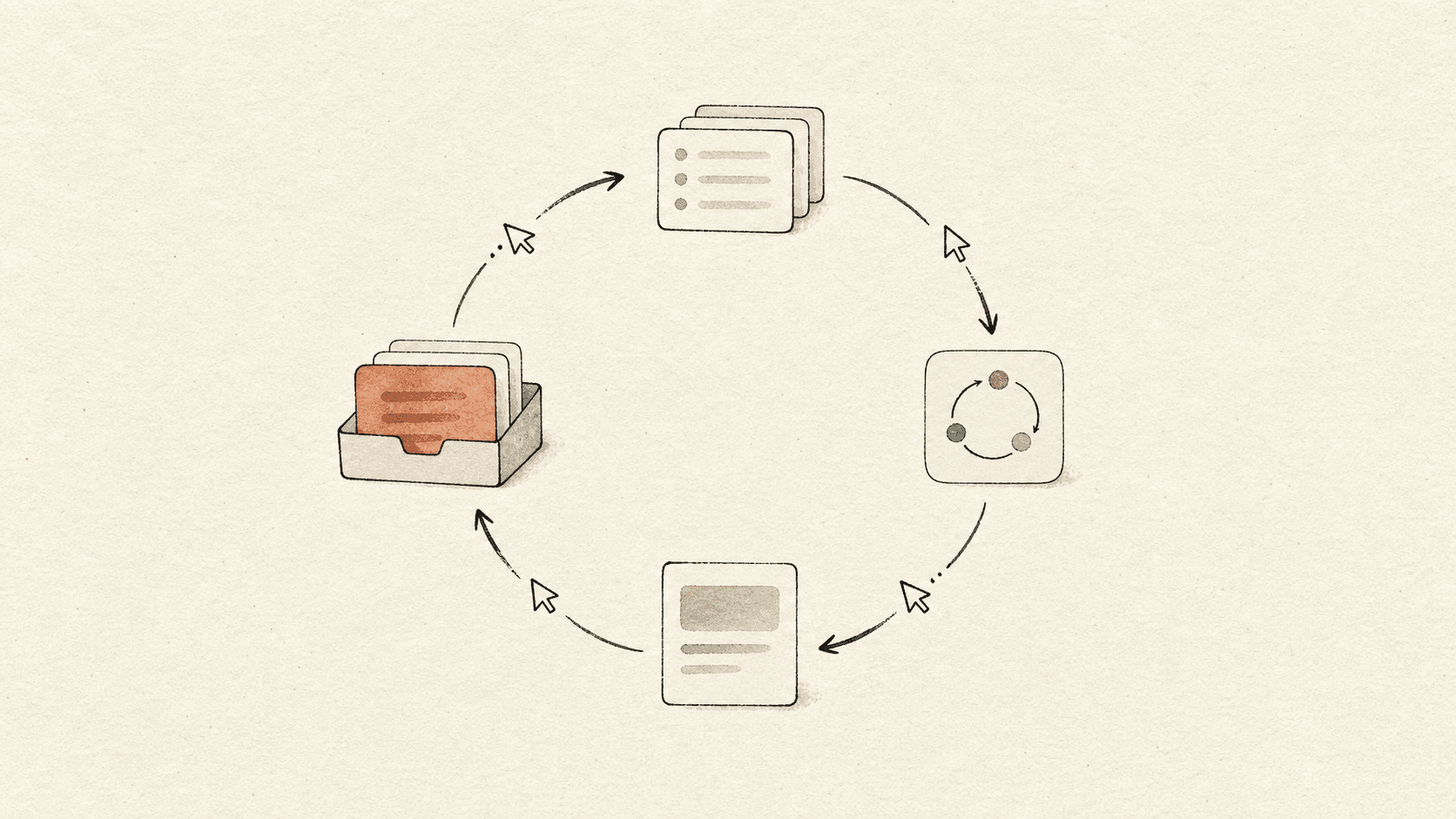

A loop is an agent process that repeatedly executes a prompt until a queue is empty or a stopping condition is reached. As of mid-2026, three converging implementations suggest the loop is becoming a primitive on par with the single-shot session: Anthropic's /loop slash command (cron-scheduled, repeating), Anthropic's routines (server-side /loop), and Matt Pocock's Ralph Wiggum loop (a bash while loop around claude --permission-mode accept-edits). Boris Cherny calls loops "the future"; Matt Pocock uses them as the AFK (away-from-keyboard) backbone of his end-to-end workflow.

The two loop families#

Cron-scheduled loops (/loop, routines)#

Used inside Claude Code and Cowork. Mechanism: the agent calls cron (via a tool) to schedule a job at a future time; when the job fires, it re-enters the agent with an instruction to perform the task. The schedule can repeat (every minute, every 5 minutes, every day).

Boris Cherny's reported uses:

- Babysit PRs — fix CI, auto-rebase

- Keep CI healthy — heal flaky tests

- Cluster Twitter feedback every 30 minutes

- "Dozens of loops running at any time"

- Overnight: "a few thousand agents" doing deeper work

Routines are the same primitive on the server, so they survive the laptop being closed.
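As a rough local analogue of the scheduling step (not how /loop or routines are implemented, which the sources do not detail), a repeating job can be written as a standard crontab entry. The helper name cron_line and the script path in the usage comment are hypothetical.

```bash
# cron_line MINUTES COMMAND...
# Prints a crontab entry that runs COMMAND every MINUTES minutes.
# Hypothetical helper for illustration; not part of Claude Code.
cron_line() {
  local minutes="$1"
  shift
  if [ "$minutes" -lt 1 ] || [ "$minutes" -gt 59 ]; then
    echo "minutes must be between 1 and 59" >&2
    return 1
  fi
  if [ "$minutes" -eq 1 ]; then
    # "* * * * *" fires every minute.
    printf '* * * * * %s\n' "$*"
  else
    # "*/N" in the minute field fires at minutes 0, N, 2N, ... of each hour.
    printf '*/%s * * * * %s\n' "$minutes" "$*"
  fi
}

# Usage (hypothetical script path):
#   cron_line 30 ./cluster-feedback.sh
```

Note that a step value only yields evenly spaced runs when it divides 60; an interval such as 45 would fire at minutes 0 and 45 of every hour.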

Backlog-draining loops (Ralph Wiggum loop)#

Used by Matt Pocock and others. Mechanism: a shell script runs the agent on a fixed prompt; the prompt instructs it to pick the next task from a backlog and complete it; then the script restarts the agent in a fresh session. The backlog is a directory of markdown issue files (or GitHub issues).

Pocock's once.sh skeleton:

```bash
# Gather the backlog and recent history as context for the agent.
issues=$(cat issues/*.md)
recent_commits=$(git log -5 --oneline)
prompt=$(cat prompt.md)

# Run one agent session with file edits auto-accepted.
claude --permission-mode accept-edits "$prompt" --context "$issues" "$recent_commits"
```

The "loop" wrapper just re-runs once.sh until the agent emits a sentinel (no more tasks) or the harness stops it.
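The wrapper might look like the following sketch. The function name run_loop, the iteration cap, and the sentinel string NO_MORE_TASKS are assumptions; the source does not say which sentinel the agent emits or how the harness decides to stop.

```bash
# run_loop CMD MAX_ITERATIONS SENTINEL
# Re-runs CMD until its output contains SENTINEL or MAX_ITERATIONS is reached.
# Prints the number of runs performed. All names here are illustrative.
run_loop() {
  local cmd="$1" max="$2" sentinel="$3"
  local i=0 output
  while [ "$i" -lt "$max" ]; do
    i=$((i + 1))
    # Each run is a fresh process; a failed run does not abort the loop.
    output=$($cmd 2>&1) || true
    case "$output" in
      *"$sentinel"*) break ;;  # agent reported an empty backlog
    esac
  done
  echo "$i"
}

# Usage (hypothetical cap and sentinel):
#   run_loop ./once.sh 20 "NO_MORE_TASKS"
```

The iteration cap plays the role of the harness stopping the loop: even if the agent never emits the sentinel, the loop ends after a bounded number of sessions.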

The prompt enforces AFK-only task selection — only tasks tagged AFK (vs human-in-loop) are eligible.
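The same eligibility rule could also be checked in the harness before the agent runs. This sketch assumes each issue file carries a line reading exactly `type: AFK`; that tag format and the function name next_afk_issue are hypothetical, since the source does not describe how the issues are tagged.

```bash
# next_afk_issue DIR
# Prints the first issue file in DIR (in glob order) tagged "type: AFK".
# Returns 1 if no eligible issue exists.
next_afk_issue() {
  local dir="$1" file
  for file in "$dir"/*.md; do
    # Skip the unexpanded pattern when the directory has no .md files.
    [ -e "$file" ] || continue
    # -x matches whole lines only, so "type: AFK-later" would not qualify.
    if grep -qx 'type: AFK' "$file"; then
      echo "$file"
      return 0
    fi
  done
  return 1
}

# Usage:
#   next_afk_issue issues || echo "backlog has no AFK tasks"
```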

Why loops matter#

- Amortize planning over many executions. One careful planning session (e.g. via Design Concept Grilling) creates a Kanban backlog (see Vertical Slice Tracer Bullets); loops drain it without further human input.

- Hours-long tasks become tractable. Rather than one giant context window, the loop fragments work into many fresh sessions — staying in the Context Window Smart Zone each time.

- Parallelization. Independent backlog items run in separate sandboxes simultaneously. Pocock's Sandcastle library does this with per-issue git worktrees in Docker containers; a merger agent reconciles the results afterwards.

- Idle compute. Boris's overnight setup runs "a few thousand agents" during cheap idle hours: work that wouldn't be worth a human's evening but produces value at agent cost.
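The per-issue isolation in the parallelization point can be approximated with plain git. This is a minimal sketch under assumptions, not Sandcastle's actual API: the function names, the agent/ branch prefix, and the ../worktrees location are hypothetical, and the Docker and merge steps are omitted.

```bash
# issue_slug FILE
# Prints the issue file name without its directory or .md suffix.
issue_slug() {
  basename "$1" .md
}

# add_issue_worktree FILE [BASE_DIR]
# Creates a new branch and a separate working directory for one issue,
# so agents working on different issues never share a checkout.
add_issue_worktree() {
  local slug base
  slug=$(issue_slug "$1")
  base="${2:-../worktrees}"
  git worktree add -b "agent/$slug" "$base/$slug"
}

# Usage (run inside the repository, one call per independent issue):
#   add_issue_worktree issues/012-add-login.md
```

Because each worktree has its own branch, the later reconciliation step reduces to ordinary branch merging.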

AFK vs human-in-loop tasks#

Matt Pocock's key distinction:

- AFK tasks — implementation, refactoring, test scaffolding, doc gardening, CI healing. The agent can succeed without per-step approval; verification is automatic (tests, types, linters).

- Human-in-loop tasks — alignment, design choices, prioritization, QA. These have no mechanical verification; they need taste and tacit context.

Loops are for the AFK class. Trying to loop human-in-loop work produces drift — the agent makes plausible-but-wrong calls and accumulates them.

Verification is the ceiling#

Pocock's stronger claim: the quality of feedback loops sets the ceiling on what loops can do. Without good tests, types, and linters, the loop is "coding blind." This is the same point made in Agent Harness Engineering about mechanical enforcement — loops just expose the cost more starkly because there's no human to catch drift.
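One way to make that feedback mechanical is a gate that runs every check and reports the first failure, so the harness can refuse a task's output. The function name run_checks and the commands in the usage comment are placeholders for whatever the project actually uses.

```bash
# run_checks CHECK...
# Each argument is one shell command string. Runs them in order and stops
# at the first failure. Prints "PASS" or "FAIL: <command>".
run_checks() {
  local check
  for check in "$@"; do
    if ! sh -c "$check" >/dev/null 2>&1; then
      echo "FAIL: $check"
      return 1
    fi
  done
  echo "PASS"
}

# Usage (placeholder commands for tests, types, and lint):
#   run_checks "npm test" "npx tsc --noEmit" "npx eslint ." || git restore .
```

A loop with no checks to pass to such a gate is the "coding blind" case: nothing distinguishes a finished task from a plausible-looking one.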

Connection to model trajectory#

Boris Cherny reports that Opus 4.7 starts loops on its own, without being asked:

"I'll tell it, 'Go pull this data query.' And it's like, 'Hey, I noticed that the data is changing over time. I'll start a loop and I'll give you a report every 30 minutes.'"

This fits Harness Shrinkage as Models Improve — capability the harness used to inject becomes natural model behavior. The loop primitive remains, but the user no longer has to invoke it.

Connections#

- Boris Cherny — primary advocate, day-to-day driver

- Matt Pocock — Ralph loop + Sandcastle exemplar

- Harness Shrinkage as Models Improve — loops as next-generation primitive replacing per-step prompting

- Context Window Smart Zone — why fragmenting into many fresh sessions beats one long one

- Vertical Slice Tracer Bullets — what fills the backlog the loop drains

- Design Concept Grilling — the planning step that justifies the loop

- Deep Modules for Agents — modules with strong test boundaries make loops viable

- Agent Harness Engineering — generalizes the "verification is the ceiling" point

- Symphony — daemon-driven equivalent at the orchestration layer

- Claude Code Auto Mode — permission classifier that lets accept-edits mode be safe in AFK loops

- Agentic Misalignment (AM) — loops + weak per-action oversight is exactly the AM threat surface; loop reviewers depend on model-side alignment holding up unattended

- AI Brain Fry — the human-side limit on output multipliers: more loop output → more review → more cognitive fatigue → more missed errors

- Human-AI Accountability Redesign — loops force the redesign question; span-of-control redesign is the missing partner for unattended loop deployments

Open questions#

- When the model schedules its own loops (the Opus 4.7 behavior), who owns the budget? Boris answered "the model just decides," but that pushes cost discipline into the model's training rather than the harness.

- Does a loop with a smart enough model still need a Kanban backlog, or does the model choose its own next task from raw goals?

- How does code review scale with loop output? Matt Pocock names review as his current bottleneck: "we just need to be ready to be doing more code review."

Sources#

- Anthropic's Boris Cherny: Why Coding Is Solved, and What Comes Next — /loop and routines as primary primitive

- Full Walkthrough: Workflow for AI Coding — Matt Pocock — Ralph loop + Sandcastle architecture

- How Anthropic's product team moves faster than anyone else | Cat Wu (Head of Product, Claude Code) — loops at the feature level (CI healing, code review)
