Summary#

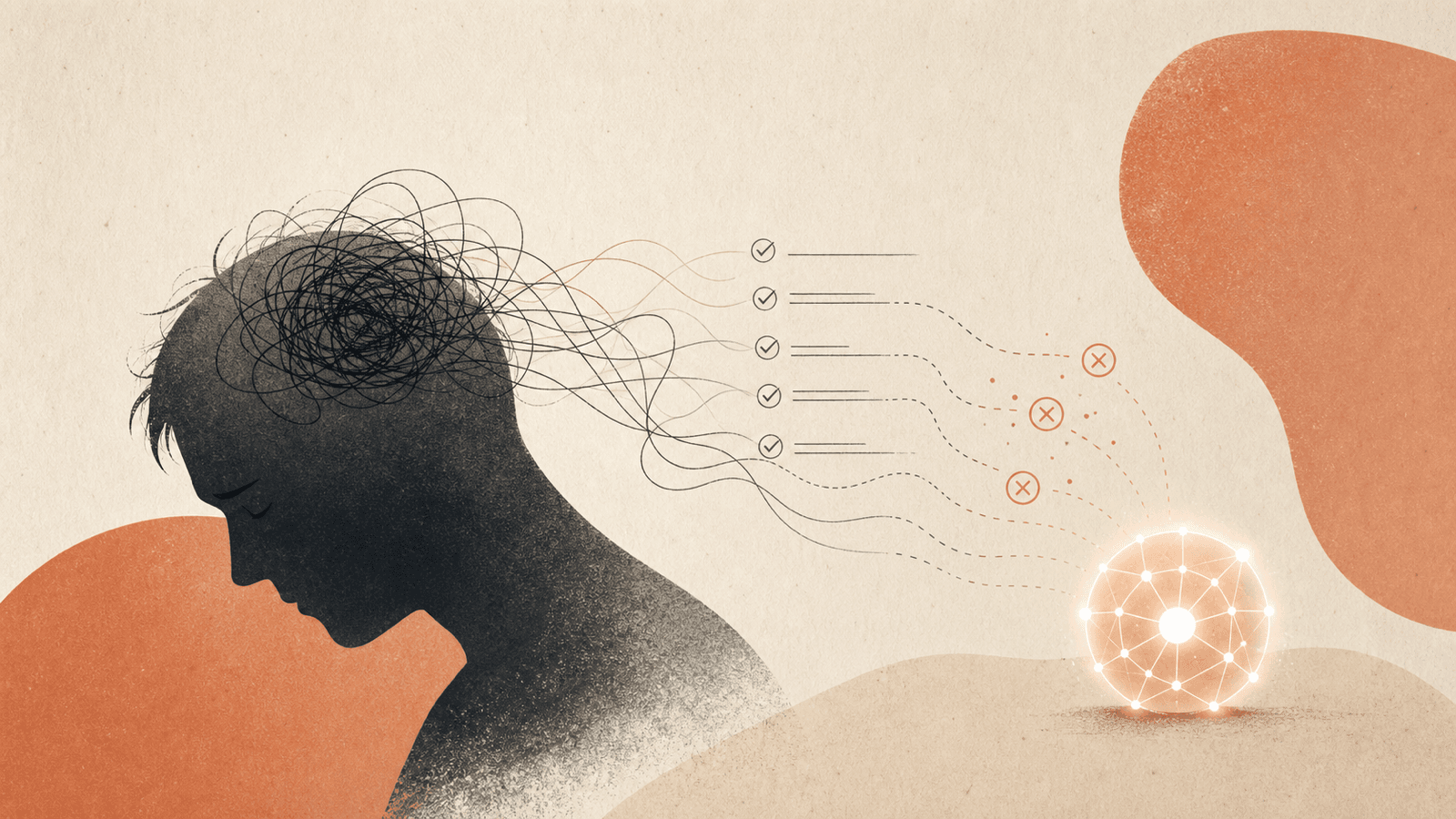

"Brain fry" is a term coined by Kropp, Bedard, Wiles, Hsu, and Krayer in HBR (March 2026, "When using AI leads to brain fry") for the mental fatigue that comes from using or overseeing AI beyond one's cognitive capacity. Workers who experience brain fry report making mistakes significantly more often than peers who don't: 11% higher minor-error frequency and 39% higher major-error frequency. The May 2026 HBR follow-up paper references it as the cognitive mechanism that may compound under AI employee framing.

The mechanism#

When an employee oversees AI output:

- AI as tool framing: the cognitive burden of review stays with the human. Heavy use → brain fry → more errors (11% more minor, 39% more major).

- AI as employee framing: humans may feel less need to engage fully in review ("ALEX-3 already did this"). This may reduce brain fry symptoms in the short run, but only by causing under-review, which is a different failure mode. The 18% drop in error catching seen in the experiment is consistent with this.

So both framings carry a cost: tool framing taxes the reviewer; employee framing swaps that tax for under-engagement.

Implications for Human-AI Accountability Redesign#

Brain fry is the cognitive-load reason why "just expand span of control" isn't viable. Increasing AI output volume per human reviewer without redesigning what review looks like has two effects:

- Past some threshold, brain fry kicks in → error rates climb.

- Even before that threshold, marginal review quality declines.

Redesign options the paper implies:

- Reduce review breadth (sample-based audit instead of every-output review)

- Concentrate review on high-stakes decision points (decision-rights gating, see Claude Code Auto Mode)

- Shift human role from per-output review to system-level oversight (orchestration quality, performance monitoring)

- Reset performance management to reward orchestration rather than per-output catching
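The first two options (sample-based audit plus always reviewing high-stakes decision points) can be combined into a simple routing rule. The sketch below is illustrative only and is not taken from the paper: the `AgentOutput` shape, the `high_stakes` flag, the `select_for_review` function, and the 10% default sample rate are all hypothetical names and values chosen for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class AgentOutput:
    """One unit of AI output awaiting possible human review (hypothetical shape)."""
    output_id: str
    high_stakes: bool  # assumption: something upstream labels high-stakes outputs


def select_for_review(outputs, sample_rate=0.1, seed=None):
    """Return the subset of outputs that should go to a human reviewer.

    High-stakes outputs are always selected (decision-rights gating);
    every other output is selected with probability `sample_rate`
    (sample-based audit instead of every-output review).
    """
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    rng = random.Random(seed)  # seeded so an audit sample can be reproduced
    selected = []
    for out in outputs:
        if out.high_stakes or rng.random() < sample_rate:
            selected.append(out)
    return selected


if __name__ == "__main__":
    # Illustrative data: every tenth output is marked high-stakes.
    outputs = [AgentOutput(f"task-{i}", high_stakes=(i % 10 == 0)) for i in range(100)]
    queue = select_for_review(outputs, sample_rate=0.1, seed=42)
    print(len(queue), "of", len(outputs), "outputs routed to a human")
```

The design choice here is that reviewer load scales with the sample rate and the share of high-stakes outputs rather than with total output volume, which is the property the redesign options are after. How to choose the rate and how to label an output as high-stakes are left open.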

Connection to coding workflow research#

- Context Window Smart Zone: the analogous cognitive limit on the model side. Models lose acuity past ~100K tokens; humans lose acuity past their oversight capacity. Both have a smart zone beyond which performance degrades faster than nominal capacity suggests.

- Harness Shrinkage as Models Improve — better models reduce per-task review needed, partially alleviating brain fry; but agents that produce more output faster reintroduce volume pressure.

- Agent Loop Pattern — loops are an aggressive output multiplier; brain fry is the human-side limit they bump into.

Connections#

- Companion concept: AI Employee Framing

- Redesign target: Human-AI Accountability Redesign

- Cognitive analog: Context Window Smart Zone (model side)

- Output multiplier: Agent Loop Pattern

- Mitigation: Claude Code Auto Mode (decision rights), system-level orchestration

Sources#

- Research: Why You Shouldn’t Treat AI Agents Like Employees (May 2026, references brain fry)

- Original paper: Kropp et al., When using AI leads to brain fry, HBR 2026/03

