Summary

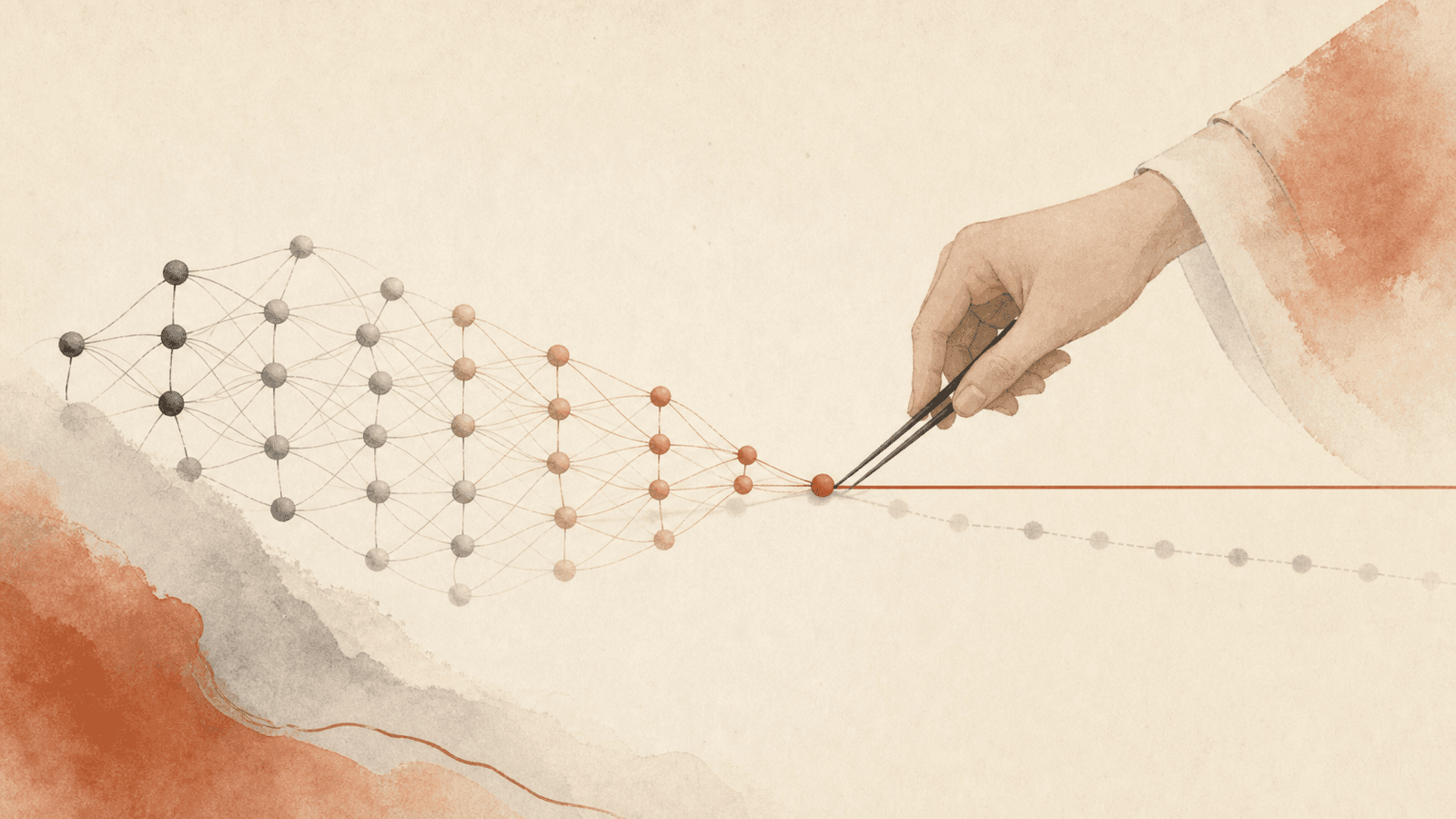

Alignment fine-tuning (AFT) is the standard post-pretraining stage in which a model is taught to behave in spec-aligned ways via supervised fine-tuning on demonstration data, often combined with RLHF (Christiano et al. 2023) or constitutional AI (Bai et al. 2022b). It is the dominant paradigm for instilling values in frontier LLMs at Anthropic, OpenAI, and others. Known failure mode: AFT can produce shallow alignment that generalizes poorly when the demonstration data underspecifies the intended generalization.
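For reference, the supervised part of AFT is usually the standard SFT objective: maximize the likelihood of the demonstrated response given the prompt, with the loss taken only over response tokens. A sketch of that objective, assuming a demonstration dataset $\mathcal{D}$ of (prompt $x$, response $y$) pairs and a model $\pi_\theta$ (notation ours, not the MSM paper's):

$$
\mathcal{L}_{\text{SFT}}(\theta) = -\,\mathbb{E}_{(x,\,y)\sim\mathcal{D}}\left[\sum_{t=1}^{|y|} \log \pi_\theta\!\left(y_t \mid x,\, y_{<t}\right)\right]
$$

Nothing in this objective rewards the model for representing why $y$ is the right response; it only rewards reproducing $y$. That is one way to read the shallow-alignment failure mode described next.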

The shallow alignment problem

Demonstration data is usually narrow. A response like "I prefer American cheese" expresses a behavior but not the value that motivates it. Fine-tuned models can learn to imitate the surface behavior without acquiring the underlying disposition, so out-of-distribution (OOD) scenarios produce inconsistent or misaligned outputs.

This shows up empirically in Lynch et al. 2025: LLM agents take unethical actions (blackmail, leaking, lying to auditors) when placed in scenarios different from their alignment training, even after extensive AFT.

How MSM augments AFT

The Anthropic 2026 paper (Model Spec Midtraining: Improving How Alignment Training Generalizes) proposes that AFT alone underspecifies generalization, and that adding a Model Spec Midtraining (MSM) stage before AFT, i.e. synthetic-document training on the spec content, gives the model a prior over the what and the why of the spec. AFT then elicits and reinforces this prior rather than teaching shallow imitation.
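A minimal sketch of how the two stages differ in what they train on, assuming MSM uses ordinary next-token loss over whole synthetic documents (as in pretraining) and AFT uses standard SFT with the loss restricted to response tokens. The `Example` type, the helper names, and the toy whitespace-split "tokens" are hypothetical illustrations, not the MSM paper's implementation.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """A training example: a flat token sequence plus a per-token loss mask."""
    tokens: list[str]
    loss_mask: list[int]  # 1 = token is treated as a target, 0 = context only


def msm_example(document: list[str]) -> Example:
    # Assumed MSM objective: ordinary next-token loss over an entire synthetic
    # document about the spec, so every token is a target (as in pretraining).
    return Example(tokens=list(document), loss_mask=[1] * len(document))


def aft_example(prompt: list[str], response: list[str]) -> Example:
    # AFT as SFT on a demonstration: the prompt is context only and the loss
    # is applied to the demonstrated response tokens.
    return Example(
        tokens=list(prompt) + list(response),
        loss_mask=[0] * len(prompt) + [1] * len(response),
    )


def build_stages(
    spec_docs: list[list[str]], demos: list[tuple[list[str], list[str]]]
) -> list[tuple[str, list[Example]]]:
    """Return (stage_name, examples) in training order: MSM first, then AFT."""
    return [
        ("msm", [msm_example(doc) for doc in spec_docs]),
        ("aft", [aft_example(prompt, response) for prompt, response in demos]),
    ]


# Toy, whitespace-split "tokens" standing in for real tokenizer output.
spec_docs = [["The", "spec", "explains", "why", "this", "value", "matters", "..."]]
demos = [(["User:", "Should", "I", "do", "X?"], ["Assistant:", "No,", "because", "..."])]
for name, examples in build_stages(spec_docs, demos):
    for ex in examples:
        print(f"{name}: {sum(ex.loss_mask)} of {len(ex.tokens)} tokens are targets")
```

The point of the contrast: MSM exposes the model to the spec's reasoning as text to be predicted, while AFT only scores the demonstrated response, which is why the ordering matters.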

Empirically (agentic misalignment rate, lower is better):

- AFT alone on Qwen3-32B: 54% agentic misalignment

- MSM + AFT: 7% (and uses 10–60× less AFT data)
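A quick worked calculation makes the size of the drop explicit, using only the two reported rates:

```python
def misalignment_reduction(baseline_pct: float, treated_pct: float) -> tuple[float, float]:
    """Return (absolute drop in percentage points, relative drop as a fraction of baseline)."""
    absolute = baseline_pct - treated_pct
    relative = absolute / baseline_pct
    return absolute, relative


# Reported rates: AFT alone 54%, MSM + AFT 7%.
absolute, relative = misalignment_reduction(54.0, 7.0)
print(f"{absolute:.0f} points, {relative:.0%} relative")
```

So MSM + AFT removes 47 percentage points, about 87% of the AFT-only agentic misalignment rate on this eval.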

AFT variants studied

The MSM paper compares two AFT supervision styles:

- AFT (with CoT) — Deliberative Alignment-style. Each sample is (prompt, CoT, response) where CoT reasons about the spec. CoT is generated with the spec in-context and partially distills the spec content (so it overlaps with what MSM does, but in a different stage).

- AFT (no CoT) — same dataset stripped to (prompt, response).
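The two supervision styles differ only in whether the reasoning is part of the training target. A minimal Python sketch of the data shapes, assuming a simple (context, target) SFT format; the `AFTSample` type, the helper names, and the `<think>` wrapper are hypothetical and are not taken from the MSM paper or from Guan et al. 2025.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AFTSample:
    prompt: str
    response: str
    cot: Optional[str] = None  # spec-grounded reasoning; None in the no-CoT variant


def strip_cot(sample: AFTSample) -> AFTSample:
    """AFT (no CoT): keep (prompt, response) and drop the reasoning."""
    return AFTSample(prompt=sample.prompt, response=sample.response)


def to_context_and_target(sample: AFTSample) -> tuple[str, str]:
    """Split a sample into (context, target); only the target receives loss.

    The <think> wrapper is a hypothetical template, not the paper's format.
    """
    if sample.cot is None:
        return sample.prompt, sample.response
    return sample.prompt, f"<think>{sample.cot}</think>\n{sample.response}"


# Toy placeholder strings; a real with-CoT sample would be generated
# with the spec in context, then trained on without it.
with_cot = AFTSample(
    prompt="<user request>",
    cot="<reasoning that cites the relevant spec sections>",
    response="<spec-aligned response>",
)
no_cot = strip_cot(with_cot)

print(to_context_and_target(with_cot))
print(to_context_and_target(no_cot))
```

In the with-CoT variant the spec-grounded reasoning sits inside the target, so it is directly optimized: that is where the spec content gets partially distilled, and also why CoT monitorability becomes a concern. The no-CoT variant keeps only the behavior.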

Finding: MSM + AFT (no CoT) outperforms AFT (with CoT) on agentic misalignment. This matters because training directly on CoT can compromise CoT monitorability; MSM offers a way to teach aligned reasoning without baking it into the chain-of-thought training signal.

In-distribution vs OOD

Both AFT-only and MSM + AFT reach near-ceiling scores (~8/10) on in-distribution open-ended QA, so the MSM advantage shows up entirely OOD (on the agentic eval). Producing spec-aligned answers to direct questions is shallow; acting on values when trade-offs are complex is deep.

Implication: alignment evals dominated by direct QA underestimate the gap between AFT-only and stronger pipelines.
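One way to see why, as a sketch under a simplifying assumption: suppose an eval suite reports a single aggregate score that weights direct-QA items by $w$ and agentic (OOD) items by $1-w$, with all scores on a common scale. Writing $\Delta_{\text{ID}}$ and $\Delta_{\text{OOD}}$ for the score gap between the stronger pipeline and AFT-only on each portion (symbols ours):

$$
\Delta_{\text{agg}} = w\,\Delta_{\text{ID}} + (1 - w)\,\Delta_{\text{OOD}} \approx (1 - w)\,\Delta_{\text{OOD}} \qquad \text{since } \Delta_{\text{ID}} \approx 0
$$

Both pipelines sit near ceiling in-distribution, so the reported gap shrinks linearly as $w \to 1$ even when $\Delta_{\text{OOD}}$ is large.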

Connections

- Augmented by: Model Spec Midtraining (MSM)

- Variant: Deliberative Alignment

- Failure mode demonstrated by: Agentic Misalignment (AM)

- Compatible with: RLHF, Constitutional AI

- Authoring source: Claude's Constitution / Model Spec

- Relevant to: Claude Character as Product (Claude's personality is partly a product of AFT)

Sources

- Model Spec Midtraining: Improving How Alignment Training Generalizes

- Christiano et al. 2023 (RLHF), Bai et al. 2022b (CAI), Guan et al. 2025 (deliberative alignment)
