What it is

Thinking Machines Lab's first interaction model — released as a research preview, May 2026. Pitched as "the first model that has both strong intelligence/instruction following and interactivity."

- Architecture: 276B-parameter MoE, 12B active. Trained from scratch as an interaction model (not a turn-based model with interactivity bolted on).

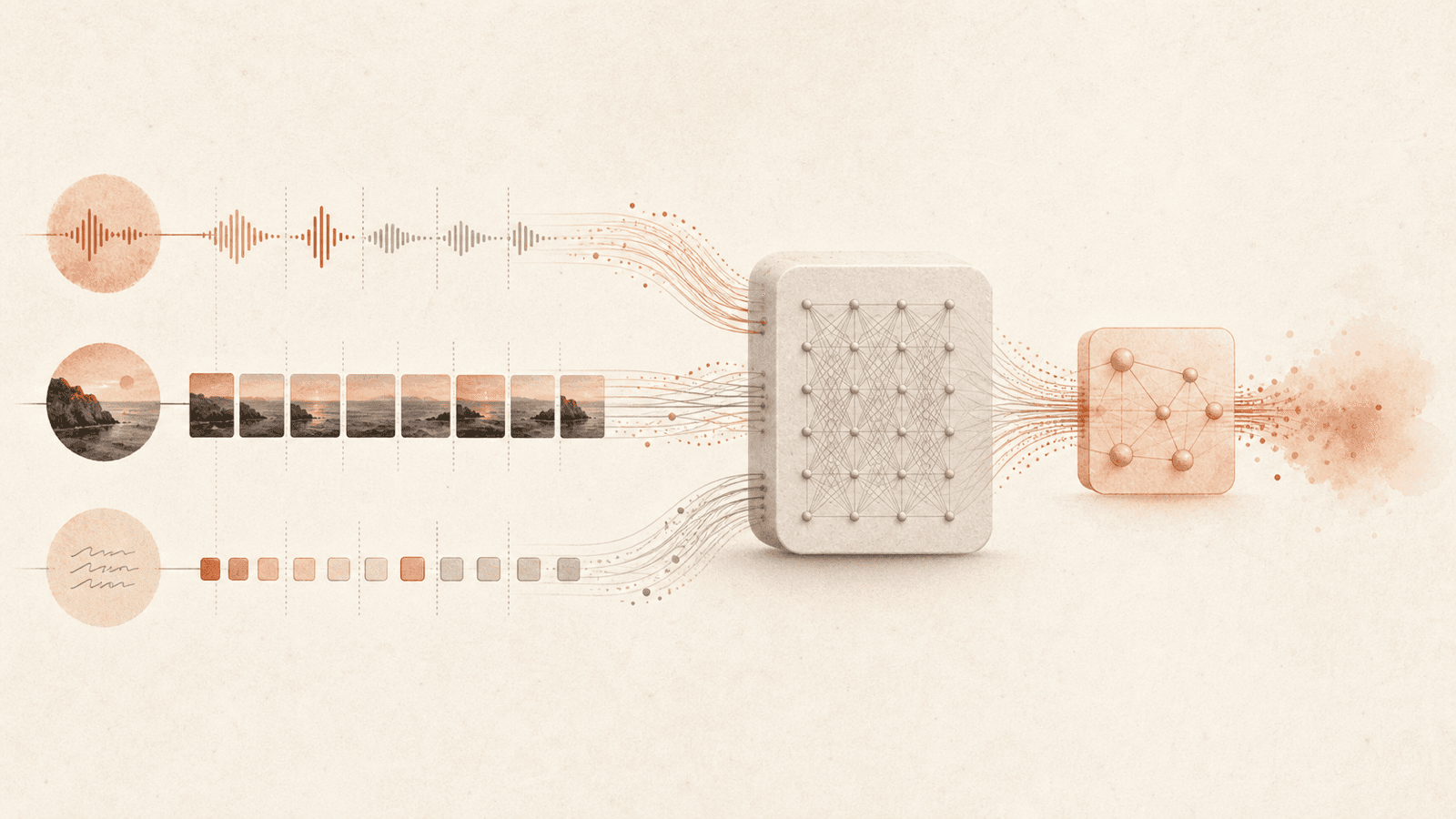

- Modalities: continuous audio + video + text in; text + audio out. Encoder-Free Early Fusion (dMel audio embedding, 40×40-patch hMLP for frames, flow head for audio out), single shared transformer, all components co-trained from scratch.

- Interaction mechanism: Time-Aligned Micro-Turns — 200ms interleaved input/output chunks, no turn boundaries.

- Reasoning: delegates deep reasoning / tool use / long-horizon work to an async background model — see Interaction / Background Model Split. Competitive on intelligence benchmarks even without the background agent.
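The micro-turn and background-split bullets above can be pictured with a toy loop. This is a minimal sketch, not the lab's implementation: `background_model`, `interaction_loop`, the "let me check" filler, and the rule that a chunk ending in "?" triggers delegation are all hypothetical, and `asyncio.sleep(0)` only stands in for waiting out a 200 ms slot. The one property it tries to show is that the interaction loop emits an output chunk in every slot and never blocks on the slow worker.

```python
import asyncio

CHUNK_MS = 200  # micro-turn length taken from the notes above


async def background_model(question: str) -> str:
    """Hypothetical slow worker standing in for deep reasoning or tool use."""
    for _ in range(3):          # pretend the work spans several scheduler ticks
        await asyncio.sleep(0)
    return f"answer to {question!r}"


async def interaction_loop(input_chunks):
    """Emit one output chunk per input chunk without waiting on the worker."""
    log = []                    # rows of (start_ms, input_chunk, output_chunk)
    pending = None
    for slot, chunk in enumerate(input_chunks):
        await asyncio.sleep(0)  # stand-in for one 200 ms slot elapsing
        if chunk.endswith("?") and pending is None:
            # Delegate and keep the conversation going in the meantime.
            pending = asyncio.create_task(background_model(chunk))
            out = "let me check"
        elif pending is not None and pending.done():
            out = pending.result()   # fold the finished result into the stream
            pending = None
        else:
            out = ""                 # silence is a valid output chunk
        log.append((slot * CHUNK_MS, chunk, out))
    return log


log = asyncio.run(interaction_loop(["what is 2+2?", "", "", "", "", "", "", ""]))
for start_ms, chunk, out in log:
    print(f"{start_ms:>5} ms  in={chunk!r:<16} out={out!r}")
```

In a real system the slots would be driven by a clock and the chunks would be audio or video frames rather than strings; the sketch keeps only the scheduling shape.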

Headline numbers (May 2026)

- Turn-taking latency: 0.40s (FD-bench v1, audio) — best of all models compared.

- FD-bench v1.5 average: 77.8 vs ~39–54 for baselines including thinking-high models.

- FD-bench v3 (audio+tools): 82.8% response quality / 68.0% Pass@1 (with background agent).

- Audio MultiChallenge APR: 43.4%, ahead of every non-thinking baseline; only GPT-realtime-2.0 xhigh (48.5%) scores higher.

- Baselines compared: GPT-realtime-2.0 (minimal/xhigh), GPT-realtime-1.5, Gemini-3.1-flash-live-preview (minimal/high), Qwen 3.5 Omni-plus-realtime. Full table in Interactivity Benchmarks.
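For a sense of scale, the figures in this list can be put through some quick arithmetic. The snippet below only restates numbers from these notes; expressing the 0.40 s latency in 200 ms micro-turn slots is a unit conversion, not a claim about how FD-bench measures it, and the baseline range uses the approximate ~39 to 54 endpoints as quoted.

```python
CHUNK_MS = 200                 # micro-turn length from the notes
TURN_TAKING_LATENCY_MS = 400   # 0.40 s on FD-bench v1 (audio)

# Integer milliseconds keep the division exact.
latency_in_slots = TURN_TAKING_LATENCY_MS // CHUNK_MS

FD_V15_SCORE = 77.8
BASELINE_RANGE = (39.0, 54.0)  # approximate range quoted in the notes

lead_over_best_baseline = round(FD_V15_SCORE - BASELINE_RANGE[1], 1)
lead_over_worst_baseline = round(FD_V15_SCORE - BASELINE_RANGE[0], 1)

APR_MODEL, APR_TOP = 43.4, 48.5  # Audio MultiChallenge APR, in percent
apr_gap = round(APR_TOP - APR_MODEL, 1)

print(f"latency = {latency_in_slots} micro-turn slots")
print(f"FD-bench v1.5 lead: {lead_over_best_baseline} to {lead_over_worst_baseline} points")
print(f"APR gap to GPT-realtime-2.0 xhigh: {apr_gap} points")
```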

Limitations (acknowledged)

- Long continuous A/V sessions accumulate context fast — careful context management still an open problem (echoes Context Window Smart Zone).

- Needs reliable low-latency connectivity; degrades badly without it.

- The model is "small" because larger pretrained models are currently too slow to serve in this regime; larger models are promised later in 2026.
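The long-session limitation is easier to see with a back-of-envelope estimate. The 200 ms chunk length comes from these notes; the tokens-per-chunk figure and the context size in the example call are hypothetical placeholders, since the notes give neither.

```python
CHUNK_MS = 200  # micro-turn length from the notes


def chunks_per_hour(chunk_ms: int = CHUNK_MS) -> int:
    """Number of micro-turn slots in one hour of continuous streaming."""
    return 3_600_000 // chunk_ms


def seconds_until_full(context_tokens: int,
                       tokens_per_chunk: int,
                       chunk_ms: int = CHUNK_MS) -> float:
    """Seconds of streaming before the context fills, with no pruning at all."""
    tokens_per_second = tokens_per_chunk * 1000 / chunk_ms
    return context_tokens / tokens_per_second


print(chunks_per_hour())  # slots per hour at 200 ms each

# Hypothetical inputs: a 1,000,000-token context and 50 tokens per chunk.
budget_s = seconds_until_full(context_tokens=1_000_000, tokens_per_chunk=50)
print(f"{budget_s / 60:.1f} minutes until the context is full")
```

Under those assumed inputs the window fills in a little over an hour, which is why some form of context management matters for long audio and video sessions.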

Availability

Limited research preview "in the coming months," wider release "later this year." Feedback solicited at interaction@thinkingmachines.ai; research grants open.

Connections

- Interaction Models: the model class

- Thinking Machines Lab: who built it

- Time-Aligned Micro-Turns, Encoder-Free Early Fusion, and Interaction / Background Model Split: its three architectural pillars

- Full-Duplex Interaction: the interaction modes it demonstrates

- Interactivity Benchmarks: its full benchmark table and the baselines it beats

- Claude Opus 4.7: an era-mate (mid-2026 frontier); 4.7's xhigh effort tier mirrors GPT-realtime's minimal/xhigh, used here as a baseline config

- Context Window Smart Zone: the long-session limitation
