Summary

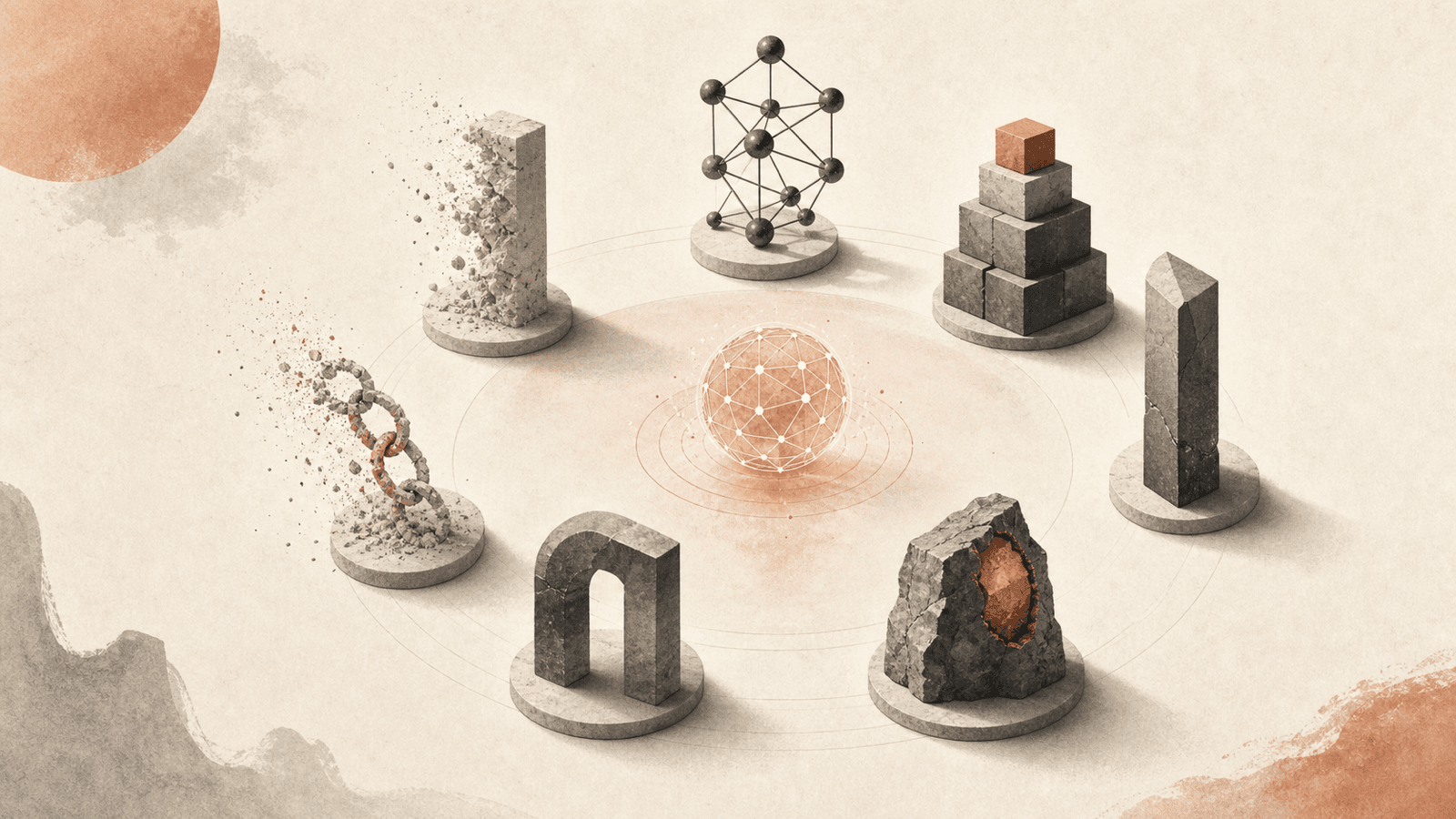

Boris Cherny uses Hamilton Helmer's 7 Powers framework (popularized by the Acquired podcast) to predict which competitive moats survive AI and which erode. His thesis: process power and switching costs collapse, while network effects, scale economies, and cornered resources persist. The often-debated "SaaS apocalypse" question is the wrong frame. The apocalypse hits a specific subset of SaaS (businesses that depend on process power or switching costs), not all of it. The direct implication for builders: the moat you bet on determines whether AI is a boost or a wrecking ball.

The seven powers (Helmer)

- Scale economies

- Network effects

- Counter-positioning

- Switching costs

- Branding

- Cornered resource

- Process power

Boris discusses five of these explicitly.

Powers that erode with AI

Switching costs

"Switching costs [erode] because you can just use the model and you can kind of port from one thing to a different thing."

If your moat is "users have built workflows, data, and integrations that would cost too much to migrate," AI agents lower that migration cost dramatically. They can rebuild integrations, port data, and regenerate macros. The user's investment in your product's particular shape becomes less binding.
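One way to make "less binding" precise is a simplified decision rule. This is an illustrative assumption, not something Boris states. A customer stays while the one-time cost of leaving, $C_{\text{switch}}$, is at least the net value $\Delta V$ they expect to gain by moving to an alternative over their planning horizon. $C_{\text{switch}}$ covers rebuilding integrations, porting data, and retraining.

```latex
\text{customer stays} \iff C_{\text{switch}} \ge \Delta V
```

In this model, switching costs work as a moat only while $C_{\text{switch}}$ stays large relative to $\Delta V$. If agents shrink $C_{\text{switch}}$, even a modest $\Delta V$ flips the inequality. The durable defense is keeping $\Delta V$ at or below zero by continuing to deliver more value than the alternatives.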

Cases this hits hardest: enterprise SaaS with deep custom integrations, vertical software with proprietary data formats, productivity tools with mountains of user-built configuration.

Mitigation: depend less on lock-in, more on continuing value delivery.

Process power

"Process power [erodes] because for companies whose mode is workflows and process and things, [Claude] is getting really good at figuring out process. And especially with 4.7, it can just hill climb anything. So if you give it a target and you tell it to iterate until it's done, it will just do it. I think this is the first model like that."

Process power means a company has spent years refining a way of doing things that competitors can't easily replicate. Boris's claim: a strong model plus a target yields an automated hill-climb that recovers the process. Process is therefore now imitable in ways it wasn't before.
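A toy sketch can make the "give it a target and iterate until it's done" mechanism concrete. It assumes, purely for illustration, that a "process" can be reduced to a few numeric knobs and that a scoring function measures how close a candidate is to the target. `hill_climb`, `score`, and `INCUMBENT` are hypothetical names. A model iterating on real workflows is far less mechanical than this greedy loop.

```python
import random
from typing import Callable, List, Tuple


def hill_climb(
    start: List[float],
    score: Callable[[List[float]], float],
    target: float,
    step: float = 0.1,
    max_iters: int = 10_000,
    seed: int = 0,
) -> Tuple[List[float], float, int]:
    """Greedy hill climbing toward a target score.

    Each iteration nudges one randomly chosen knob up or down by `step`
    and keeps the change only if the score improves. It stops as soon as
    the score reaches `target` ("iterate until it's done") or the
    iteration budget runs out. Returns (knobs, score, iterations used).
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    current = list(start)
    best = score(current)
    for i in range(max_iters):
        if best >= target:
            return current, best, i
        candidate = list(current)
        k = rng.randrange(len(candidate))
        candidate[k] += rng.choice((-step, step))
        s = score(candidate)
        if s > best:  # accept strictly improving moves only
            current, best = candidate, s
    return current, best, max_iters


# Hypothetical "incumbent process": knob settings refined over years.
INCUMBENT = [0.3, 0.7, 0.5]


def score(knobs: List[float]) -> float:
    # Higher is better; 0.0 means the incumbent's settings are matched.
    return -sum((k - t) ** 2 for k, t in zip(knobs, INCUMBENT))


if __name__ == "__main__":
    knobs, final, iters = hill_climb([0.0, 0.0, 0.0], score, target=-1e-3)
    print([round(k, 2) for k in knobs], iters)
```

Starting from all zeros, the climber should land on the incumbent's knob settings within a modest number of iterations. The catch is that it only ever finds what `score` rewards. Here the score is written directly against the incumbent's settings, and writing that score is the hard part in practice.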

Cases this hits hardest: operationally excellent companies whose advantage is "we run X better than anyone else," with no scale or network effects backing it. Examples include distribution, operations, and customer service.

Mitigation: to survive, process power needs to be paired with a structurally protected power (scale economies, network effects).

Powers that persist

Network effects

User value scales with other users on the platform. AI doesn't change that. A messaging app, a marketplace, a developer ecosystem — the value is in the network, not in the code that supports it. AI may reduce the cost of building the supporting code, but it doesn't replicate the network.

Scale economies

Cost-per-unit drops with scale. AI compute itself has scale economies (foundation model training, GPU fleet utilization). Verticals where capital intensity matters — semiconductors, infrastructure — keep their moat.
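A standard textbook cost model shows why cost per unit drops with scale. This is a generic fixed-plus-variable model, not something Boris specifies. Suppose producing $q$ units costs a fixed amount $F$ up front (a training run, a fab) plus a marginal cost $c$ per unit. Average cost is then:

```latex
AC(q) = \frac{F + c\,q}{q} = \frac{F}{q} + c
```

With illustrative numbers $F = 100$ and $c = 1$, $AC(10) = 11$ while $AC(1000) = 1.1$. As $q$ grows, $AC$ approaches $c$. In this model, anything that lowers $c$ for every producer leaves the $F/q$ gap between large and small producers untouched, which is why capital-intensive scale keeps its edge.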

Cornered resources

Exclusive access to a key input — a contract, a regulator, a research result, a data stream. AI doesn't grant access. If your business sits on a contract no competitor can replicate, AI doesn't dissolve that.

Powers Boris doesn't explicitly evaluate

- Counter-positioning — Boris doesn't address this, but the analogy in Printing Press Software Democratization suggests counter-positioning flourishes: AI-native startups can choose business models incumbents structurally can't.

- Branding — also unaddressed; arguably persists since AI doesn't reduce brand-formation costs to zero.

Why startups specifically benefit

"If you look at the number of startups today or like maybe in the next 10 years, I think the number of startups in the next 10 years that are just going to disrupt everything is going to increase like 10×."

"A large company has to evolve their business process, retrain everyone, face internal resistance to that. No one [in this room] has that problem. If you're starting fresh, you can build with AI natively from the ground up."

Startups don't have process-power-dependent businesses to defend. They can pick the powers AI doesn't erode and build directly on those, without paying the migration cost incumbents face.

Counter-considerations

- Brand-and-trust SaaS companies (Stripe, Slack, etc.) rest on switching costs plus network effects or scale. Even if switching costs erode, the rest holds. Boris's framework correctly predicts they're more exposed than pure network-effect companies, but not catastrophically so.

- Process power isn't dead — it's harder to monopolize. A model can hill-climb most processes given a target, but defining the right target and feeding the right inputs is itself a skill. Process power may be in transition rather than gone.

- Cornered resources include "talent." Boris doesn't dwell on this, but if frontier-AI talent is cornered, that is a power AI itself amplifies for the holder (Anthropic, OpenAI, Google).

- Mythos / Opus 4.7 as cornered resource. Anthropic dogfoods both internally before release. The "cornered resource" of a frontier model creates a window where the holder is meaningfully ahead — but the window closes when the model ships.

Implications for builders

| If your moat is | Effect of building AI-native |
|---|---|
| Network effects | AI helps: better tools to capture and scale the network |
| Scale economies | AI helps: better tools to drive unit costs lower |
| Cornered resources | AI is neutral: it neither grants access to your resource nor dissolves it |
| Switching costs | At risk: find a replacement moat or accept margin compression |
| Process power | At risk: pair with another power or accept commoditization |

Connections

- Boris Cherny — articulator

- Printing Press Software Democratization — companion analogy (cost-of-production collapse) explaining why certain powers shift

- Engineer PM Convergence — internal mirror: process-heavy organizational structures lose value the same way process-power moats do

- Harness Shrinkage as Models Improve — process-imitation by hill-climbing models is the direct mechanism behind process-power erosion

- AI Native Product Cadence — startup advantage of building AI-native is operational, not just strategic

Open questions

- Is "switching cost" really collapsing in practice, or just in narrative? Anthropic's own retention numbers, Salesforce churn, etc. would test this.

- What does Boris's "cornered resource" look like for foundation-model labs that are themselves trying to commoditize? Internal contradiction or transient phase?

- Counter-positioning — explicitly the "incumbent can't follow" power — should amplify under AI. Is anyone running this play deliberately?

Derived

- Learning to Co-Work with AI: A Software Engineer's Field Guide — moats-that-survive logic applied to individual careers (strategic positioning skill cluster)

- Opinions on Using AI Tools & the Future of the Software Engineering Role — strategic-positioning section: which moats (and which careers) survive the AI shift
